evolve or perish

LEGEND

VJ/DJ PRIVATE EYES NYC

4AD / BEGGARS BANQUET (VIDEO)

COLUMBIA RECORDS (MUSIC VIDEO)

SONY MUSIC (SVP, FIRST DIGTAL EXEC)

WIREDSET/TRENDRR (CEO, acquired by …)

TWITTER (GM CURATOR)

ANGEL/MENTOR/CONSUT/CREATE/TEACH

ACTIVIST

Mark Ghuneim is an American internet entrepreneur, activist, and art curator with a multifaceted career spanning the music industry, digital marketing, privacy advocacy, and art curation. He is known for his innovative contributions to each of these fields and his passion for exploring the intersection of technology, privacy, and art.

Career: Ghuneim’s early career began in the music industry, where he served as a VJ and DJ at Private Eyes, a video nightclub in New York City. He also gained experience in music video production while working with Thirsty Ear/Beggar’s Banquet and record labels like 4AD. He later joined Columbia Records, holding various positions including VP of Video Promotion, VP of Online and Emerging Technologies, and Senior VP of Online and Emerging Technologies. As the first digital executive at Sony Music Entertainment, Ghuneim implemented online marketing campaigns and spearheaded innovations in social networking and community management.

In 2004, Ghuneim founded Wiredset, a digital services company that provided marketing and consulting services to clients in the entertainment industry. Wiredset later developed Trendrr, a real-time tracking and analysis platform for social media engagement and trends. Trendrr became a success and was acquired by Twitter in 2013. Ghuneim then became the General Manager of Twitter’s Curator product, a tool for curating tweets and other content for use in media broadcasts and publications. He also worked on business development and media partnerships for Twitter.

Advocacy and Art: Ghuneim founded Hacked.net in 1997, a platform focused on information security, and he testified before Congress on related issues. He actively worked with the ACLU and the New York Civil Liberties Union to advocate for privacy rights and oppose surveillance in public spaces. As an art curator, Ghuneim co-curated the exhibition “Public, Private, Secret” at the International Center of Photography (ICP) in New York from 2016 to 2017. The exhibition explored the roles of photography and video in shaping identity and the social conventions that define public and private selves.

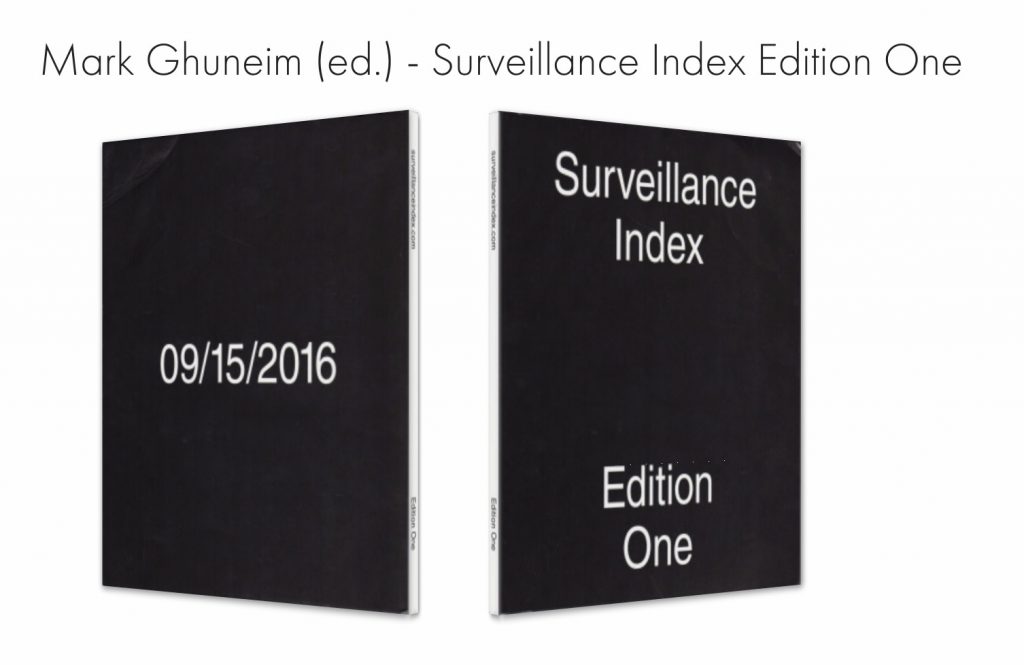

He also authored, co-curated, and contributed to the “Surveillance Index” exhibition and book series, which presented a collection of books related to surveillance photography, with the first edition launched in 2016 at the ICP Museum1 Book Launch: Surveillance Index Edition One by Mark Ghuneim

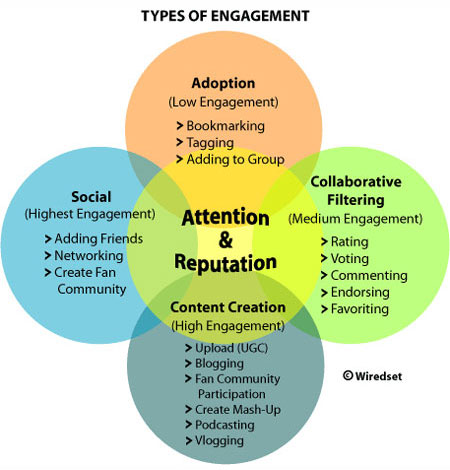

Ghuneim holds a US patent (8,271,429) for a “System and method for collecting and processing data.” He has authored papers, including “Terms of Engagement: Measuring the Active Consumer” (2008), and produced the documentary “Pixies: Gouge.” Mark Ghuneim continues to work as an angel investor, mentor, consultant, and creator, and he remains actively involved in privacy activism and art curation. His career is driven by the philosophy of “evolve or perish,” reflecting his commitment to continuous innovation and positive change. Mark Ghuneim | Evolve or Perish.

Surveillance Index Edition One is a collection of one hundred books related to surveillance photography.

Pixies: Gouge Producer (IMDB)

Patents

System and method for collecting and processing data US Patent 8,271,429 Cited by 63

Books

- “DIGIPEDIA: The Basic Guide to Digital Marketing and…” (2019) by Deepa Sayal.

- “Friends, Followers and the Future: How Social Media are…” (2012) by Rory O’Connor.

- “Buyographics: How Demographic and Economic Changes Will…” (2016) by M. Carmichael.

- “Encyclopedia of Social Media and Politics” (2013) by Kerric Harvey.

- “I Want My MTV: The Uncensored Story of the Music Video…” (2012) by Rob Tannenbaum and Craig Marks.

- “Appetite for Self-Destruction: The Spectacular Crash of the…” (2009) by Steve Knopper.

- “Connected Viewing: Selling, Streaming, & Sharing Media in…” (2013) by Jennifer Holt and Kevin Sanson.

- “Social TV: How Marketers Can Reach and Engage Audiences by…” (2012) by Mike Proulx and Stacey Shepatin.

- “Advertising Promotion and Other Aspects of Integrated…” (2012) by Terence A. Shimp and J. Craig Andrews.

- “A Twitter Year: 365 Days in 140 Characters” (2011) by Kate Bussmann.

- “The Business of Media Distribution: Monetizing Film, TV, and…” (2019) by Jeffrey C. Ulin.

- “All These Things That I’ve Done: My Insane, Improbable Rock Life” (2016) by Matt Pinfield and Mitchell Cohen.

- “Music Industry and the Internet: New Strategies for Retail,…” (1997).

- “Online Access – Volume 10” (1995).

- “Business Week – Issues 3949-3957” (2005).

- “Broadcasting & Cable – Volume 130” (2000).

- “LexisNexis Corporate Affiliations” (2007).

- “Directory of Corporate Affiliations – Volumes 3-4” (1995).

- “Hospitality – Issues 9-16” (2008).

- “NewMedia – Volume 6, Issues 3-16” (1996).

- “The … Mix Annual Directory of Recording Industry…” (1987).

- “Interactive Music Handbook: The Definitive Guide to Internet…” (1998) by Jodi Summers and Jon Samsel.

- “Vibe – Volume 4, Issues 7-10” (1996).

- “El jazz en el agridulce blues de la vida” (2002) by Wynton Marsalis and Carl Vigeland.

- “Public, Private, Secret: On Photography and the…” (2018) by Charlotte Cotton, Marina Chao, and Pauline Vermare.

- “Entertainment e comunicazione. Target Strategie Media:…” (2012) by Sergio Cherubini and Simonetta Pattuglia.

- “Recording Industry Sourcebook” (1994).

- “Redes Sociais: A União Faz a Força!” (2020) by Davidson Scarano.

- “La révolte du pronétariat: des mass média aux média des masses” (2006) by Joël de Rosnay.

- “Surveillance Index: Edition One” (2016) by Mark Ghuneim.

Magazines

- Mark Ghuneim, has been featured in a variety of press and media outlets over the years for his contributions to the industry. His work has been highlighted in prominent publications such as Billboard, Ad Age, Variety, The Hollywood Reporter, WIRED, and others.

Mashable

Newspapers

- “NEIGHBORHOOD REPORT — NEW YORK ON LINE; Watching the Watchers” (Oct. 31, 1999)

- Description: Mark Ghuneim’s online Web site offering locations and descriptions of surveillance cameras on New York City streets described; map (New York On Line column). By Denny Lee Link to Article

- “The ‘Lost’ Finale, in Twitter Traffic” (May 24, 2010)

- Description: During the final episode of “Lost,” Twitter exploded as devotees weighed in with commentary, nostalgia and playful jabs about the show.

- By Jenna Wortham Link to Article

- “A Super Bowl Where Viewers Let Their Fingers Do the Talking” (Feb. 6, 2012)

- Description: Twitter was abuzz with comments during the big game, reaching a peak of 12,223 posts per second, with a total of about 15 million.

- By Brian Stelter Link to Article

- “‘Sharknado’ Tears Up Twitter, if Not the TV Ratings” (July 12, 2013)

- Description: The much-talked-about connection between social media chatter and television ratings didn’t entirely bear out for “Sharknado.”

- By Brian Stelter Link to Article

- “Water-Cooler Talk About the Water-Cooler Effect” (Feb. 24, 2010)

- Description: Tweets and email messages about a report on the online water-cooler effect that television executives are crediting for record ratings for big-ticket events like the Super Bowl and the Olympics.

- By Brian Stelter Link to Article

- “Twitter Buys a Referee in the Fight Over Online TV Chatter” (Aug. 28, 2013)

- Description: The social network has bought Trendrr, a research firm that recently published a study on the importance of Facebook, Twitter’s arch-rival, as an online platform for people to discuss television shows while they are airing.

- By Vindu Goel Link to Article

- “Does the ‘House of Cards’ All-You-Can-Eat Buffet Spoil Social Viewing?” (Feb. 19, 2013)

- Description: The great thing about Netflix’s “House of Cards”? You can watch it all at once. That may be the bad thing, too. How do you gather around a water cooler and talk about a moving target?

- By David Carr Link to Article

- “The Breakfast Meeting: A Loss at DreamWorks Animation, and a Cute Interspecies Rescue Was Fake” (Feb. 27, 2013)

- Description: DreamWorks suffered an earnings loss and talked about staff cuts; a heartwarming YouTube video of a pig rescuing a goat that was fodder for many news outlets was faked; and “American Idol” tries to close the feedback loop on TV hashtags.

- By Daniel E. Slotnik Link to Article

- “New TV Hit Hums Along Online, Too” (June 6, 2011)

- Description: Twitter chitchat and a team format are giving “The Voice” a boost in the ratings.

- By Brian Stelter and Bill Carter Link to Article

- “‘Lost’ Fans Suffer From Blabbermouths Online” (May 21, 2010)

- Description: For the finale, ABC is taking steps to keep online chatter from ruining the suspense across the time zones.

- By Jenna Wortham Link to Article

Audio/podcasts

Public, Private, Secret | International Center of Photography [2016-2017] Public Private Secret

Website: https://www.icp.org/exhibitions/public-private-secrethttps://www.publicprivatesecret.org/

Essay: Interview: Real-Time Media Curator Mark Ghuneim with Curator-in-Residence Charlotte Cotton

Created 7 Real-time works. Named + co-curated the show with Charlotte Cotton ^

Related Book : Public, Private, Secret: On Photography and the Configuration of Self

–

LE BAL, Paris

PERFORMING BOOKS # 1

JANUARY 10 TO 27, 2018

SURVEILLANCE INDEX Film – Mark Ghuneim

Email: “first@lastname.com”

(see below for spelling)

Twitter: @MarkGhuneim