MusicLM: Generating Music From Text

“We introduce MusicLM, a model generating high-fidelity music from text descriptions such as “a calming violin melody backed by a distorted guitar riff”. MusicLM casts the process of conditional music generation as a hierarchical sequence-to-sequence modeling task, and it generates music at 24 kHz that remains consistent over several minutes. Our experiments show that MusicLM outperforms previous systems both in audio quality and adherence to the text description. Moreover, we demonstrate that MusicLM can be conditioned on both text and a melody in that it can transform whistled and hummed melodies according to the style described in a text caption. To support future research, we publicly release MusicCaps, a dataset composed of 5.5k music-text pairs, with rich text descriptions provided by human experts.”

To get a real idea of what this model can do, listen to the examples on the Project page.

Audio Generation From Rich Captions : The audio is generated from a single rich text caption describing the music.

Long Generation

Story Mode : The audio is generated by providing a sequence of text prompts. These influence how the model continues the semantic tokens derived from the previous caption.
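To make the Story Mode idea concrete, here is a minimal toy sketch, not MusicLM's actual code. It assumes the model is an autoregressive token generator whose conditioning can be swapped mid-sequence. The names `next_token` and `story_mode`, the prompts, and the token rate are all hypothetical stand-ins. The point it illustrates is that each new prompt continues from the tokens already generated instead of starting over.

```python
import random


def next_token(prompt, history, rng, vocab_size=16):
    """Toy stand-in for a text-conditioned autoregressive model (hypothetical)."""
    # The prompt decides which token the sequence drifts toward.
    target = sum(prompt.encode("utf-8")) % vocab_size
    # The history provides continuity: start from the last generated token.
    prev = history[-1] if history else target
    drift = (target > prev) - (target < prev)  # -1, 0 or +1
    jitter = rng.choice([-1, 0, 0, 1])
    return (prev + drift + jitter) % vocab_size


def story_mode(schedule, tokens_per_second=2, seed=0):
    """Generate one token sequence from a list of (prompt, seconds) pairs."""
    rng = random.Random(seed)
    tokens = []
    segments = []
    for prompt, seconds in schedule:
        start = len(tokens)
        for _ in range(seconds * tokens_per_second):
            # The full history, including earlier prompts' tokens, is passed on.
            tokens.append(next_token(prompt, tokens, rng))
        segments.append((prompt, start, len(tokens)))
    return tokens, segments


# Made-up prompts and durations, for illustration only.
schedule = [("calm piano", 3), ("fast drums", 2)]
tokens, segments = story_mode(schedule)
for prompt, start, end in segments:
    print(prompt, tokens[start:end])
```

Because the second segment starts from the last token of the first one, the transition is gradual, which is the behaviour the description above attributes to continuing the semantic tokens of the previous caption.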

Text and Melody Conditioning : By adding melody embeddings to the conditioning, we can generate music that respects the text prompt while following the provided melody.

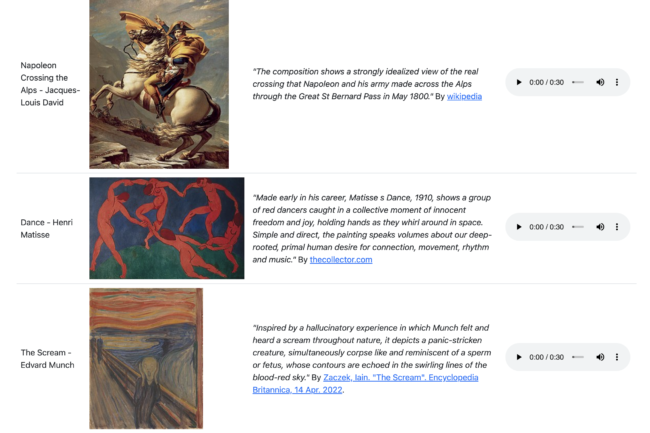
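One plausible way to picture the text and melody conditioning is as a longer conditioning prefix. The sketch below assumes both signals are already available as discrete tokens and are simply concatenated. The vocabulary sizes, the offset scheme, and the function `build_conditioning` are assumptions made for illustration, not details confirmed by this post.

```python
TEXT_VOCAB = 1024    # hypothetical number of text-conditioning tokens
MELODY_VOCAB = 1024  # hypothetical number of melody tokens


def build_conditioning(text_tokens, melody_tokens=None):
    """Return the conditioning prefix fed to the generator (toy version)."""
    if any(not 0 <= t < TEXT_VOCAB for t in text_tokens):
        raise ValueError("text token out of range")
    prefix = list(text_tokens)
    if melody_tokens:
        if any(not 0 <= m < MELODY_VOCAB for m in melody_tokens):
            raise ValueError("melody token out of range")
        # Shift melody ids so they never collide with text ids.
        prefix.extend(TEXT_VOCAB + m for m in melody_tokens)
    return prefix


text_only = build_conditioning([1, 2])
text_and_melody = build_conditioning([1, 2], [0, 5])
print(text_only)        # text conditioning alone
print(text_and_melody)  # text conditioning followed by melody conditioning
```

With this layout the same generator can be used with or without a melody: the melody tokens are optional extra context appended to the text conditioning.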

Painting Caption Conditioning (!!!)

Generation Diversity

Test the diversity of the generated samples while keeping the conditioning and/or the semantic tokens constant.
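The diversity test can be sketched with a toy sampler, assuming that generation draws tokens from a probability distribution determined by the conditioning. Here a fixed list of logits plays the role of the constant conditioning, and only the random seed changes between samples. The functions `sample_tokens` and `distinct_fraction` and the logit values are hypothetical.

```python
import math
import random


def sample_tokens(logits, n_tokens, seed, temperature=1.0):
    """Draw n_tokens ids from softmax(logits / temperature)."""
    rng = random.Random(seed)
    # Unnormalized softmax weights; random.choices normalizes them itself.
    weights = [math.exp(value / temperature) for value in logits]
    return rng.choices(range(len(logits)), weights=weights, k=n_tokens)


def distinct_fraction(samples):
    """Fraction of samples that are unique, as a crude diversity measure."""
    return len({tuple(s) for s in samples}) / len(samples)


# The "conditioning" is held constant: the same logits for every sample.
fixed_logits = [2.0, 1.0, 0.5, 0.0]
samples = [sample_tokens(fixed_logits, n_tokens=8, seed=s) for s in range(5)]
for sample in samples:
    print(sample)
print("distinct fraction:", distinct_fraction(samples))
```

Lowering `temperature` concentrates the probability on the highest-logit token, so the samples become more alike. Raising it spreads the probability and increases the variety.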